Last Updated 1 week ago by cneuhaus

It's a try... curious if it will work... fresh from California...

Always-On Low Power Speech Recognition with Syntiant NDP101

NDP101 – fresh from Digi-Key – what do I want to do now?

I will integrate the Syntiant TinyML NDP101 board with my latest project: GoodWatch – a super smart voice-controlled watch.

Currently GoodWatch uses an ESP32 as the MCU for speech recognition. It works with 10 numbers and two keywords (yes, no). But to get good results for so many keywords and a quick response, I had to cheat a bit: it only recognizes my own voice. If you want to learn more about ML on edge devices, have a look at: Overview of ML on Edge – Devices. You can see how far I got with an ESP32 here:

With this project I have two objectives:

- Wake up the alarm clock with a keyword to set up the alarm, so that it runs 100% voice-controlled

- Enable speech recognition for more than just my own voice and make it highly reliable (otherwise setting up the old clock will be faster 🙂)

Some facts on the TinyML Development Board

Board Overview

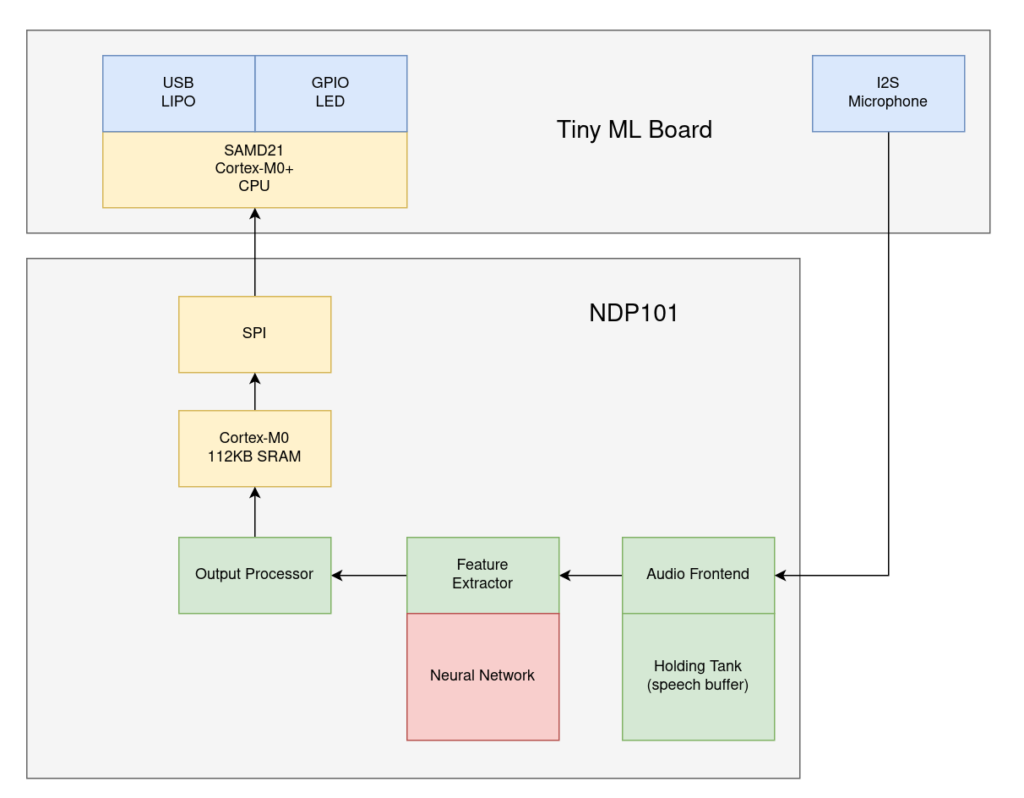

I have ordered the “Syntiant TinyML” development board. It combines the NDP101 processor with a Cortex-M0+ 32-bit ARM MCU, a SPH0641LM4H microphone, a LiPo power connector and a USB plug, so it is ready to go. In more detail:

• Neural Decision Processor: NDP101

• Host processor: SAMD21 Cortex-M0+ 32bit low power ARM MCU, including:

◦ 256KB flash memory

◦ 32KB host processor SRAM

• Board power supply: 5V micro-USB or 3.7V LiPo battery

• 5 Digital I/Os compatible with Arduino MKR series boards

• 1 UART interface (included in the digital I/O Pins)

• 1 I2C interface (included in the digital I/O Pins)

• 2MB on-board serial flash

• 48MHz system clock

• One user defined RGB LED

• uSD card slot (uSD card not included)

• BMI160 6 axis motion sensor

• SPH0641LM4H microphone

The board has two components: a normal low-power ARM MCU with a USB connection (for communication, control, loading the models…) and an SPI connection to the heart of the system, the NDP101 MCU.

About the NDP101 MCU: its main component is a neural network with a fixed structure of 3 dense layers of 256 neurons and 3 dropout layers. The input layer must always have 1600 features.
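To get a feeling for the size of this fixed network, here is a rough back-of-the-envelope sketch in Python. It only uses the numbers above (1600 inputs, three dense layers of 256 neurons). Everything else is my assumption: that each neuron has a bias, and a hypothetical output layer of 13 classes (10 digits, yes, no, plus an "unknown" class). Dropout layers add no trainable parameters, so they do not show up in the count.

```python
def dense_param_count(layer_sizes):
    """Count weights and biases of a fully connected network.

    layer_sizes lists the width of every layer, input first.
    Assumes one bias per neuron (an assumption, not confirmed for the NDP101).
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # weight matrix between two layers
        total += n_out         # one bias per neuron in the next layer
    return total


# From the text: 1600 input features, three dense layers of 256 neurons.
HIDDEN_STACK = [1600, 256, 256, 256]

# Hypothetical: 13 output classes (10 digits, yes, no, unknown).
NUM_CLASSES = 13

hidden_params = dense_param_count(HIDDEN_STACK)
total_params = dense_param_count(HIDDEN_STACK + [NUM_CLASSES])

print("parameters in the three dense layers:", hidden_params)
print("parameters including the assumed output layer:", total_params)
```

Almost all parameters sit in the first layer (1600 × 256 weights), which is why the input size is fixed by the hardware.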

Data recorded from the microphone is processed directly by the audio frontend (16 bit). The “Holding Tank” works as a rolling buffer of up to 3 seconds of speech. The feature extractor performs a pretty standard mel transformation (log-mel filterbank, LMFB) before the data is fed to the neural network. You can read more about this here.
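The rolling-buffer idea behind the “Holding Tank” can be sketched in a few lines of Python. This is only an illustration of the concept, not how the chip implements it: the class name `HoldingTank` and the 16 kHz sample rate are my assumptions, only the 3-second limit comes from the description above.

```python
from collections import deque

SAMPLE_RATE_HZ = 16_000  # assumption, not stated in this post
BUFFER_SECONDS = 3       # from the text: up to 3 seconds of speech


class HoldingTank:
    """Hypothetical rolling buffer that keeps only the newest samples."""

    def __init__(self, sample_rate=SAMPLE_RATE_HZ, seconds=BUFFER_SECONDS):
        # A deque with maxlen silently drops the oldest items when full.
        self.samples = deque(maxlen=sample_rate * seconds)

    def push(self, chunk):
        """Append new audio samples, discarding the oldest if needed."""
        self.samples.extend(chunk)

    def snapshot(self):
        """Return the buffered audio, oldest sample first."""
        return list(self.samples)


tank = HoldingTank()

# Feed 4 seconds of fake audio; each second is filled with its own index.
for second in range(4):
    tank.push([second] * SAMPLE_RATE_HZ)

window = tank.snapshot()
print(len(window), window[0], window[-1])
```

After 4 seconds of input only the last 3 seconds remain in the buffer, so the first second (the samples with value 0) is gone.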

I guess the small MCU is used to manage the communication via SPI with the outside world.

Software

The story has just started. The code is available at: https://github.com/happychriss/Goodwatch_NDP101_SpeeechRecognition

This is an ongoing project; I have no clue if it will work out or how long it will take. I will keep posting on the progress, starting in the next few days with the development set-up and the first steps.